Artificial intelligence (AI) is poised to change the world in ways we can’t yet fully comprehend, from how we work to how we structure our lives. Scientists debate how a future artificial general intelligence (AGI) — an advanced AI that can reason just as well as humans and learn new skills beyond its initial training — might affect us, but there’s little doubt that these effects will be profound.

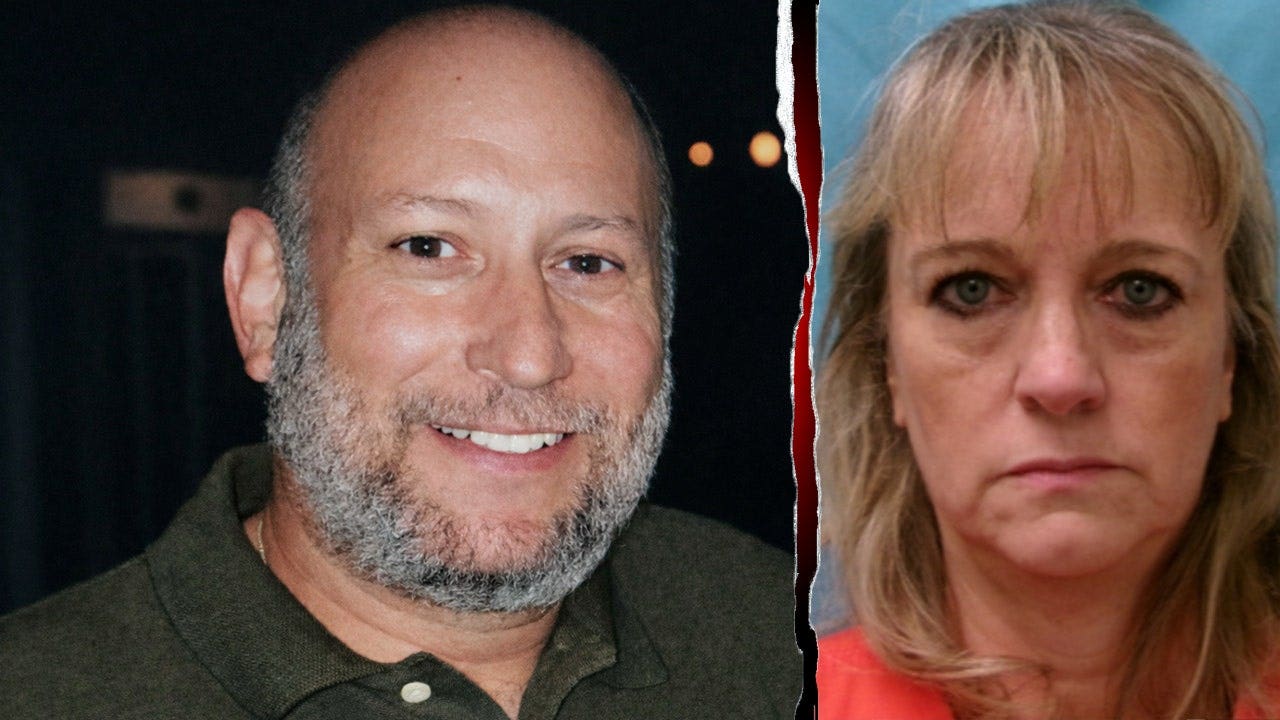

In his new book, “Generation AI and the Transformation of Human Being” (Nquire Media, 2026), biophysicist and philosopher Gregory Stock draws on evolutionary biology and social science, as well as recent breakthroughs in AI, to explore what the future might hold for “Generation AI” — people born after 2022.

In this extract, Stock, who does not personally buy into AI doomsday scenarios, fleshes out what a realistic AI-driven endgame for humanity might actually look like, challenging the reader to consider whether such an extreme outcome is plausible given that there are plenty of other, more likely pathways to human extinction.

The idea of non-biological superintelligence conjures deep existential concerns. So, let’s face this question directly: Does this doom humanity? Apocalyptic dystopias about humans being ruled, destroyed, or enslaved by AI abound, and it seems plausible that within 200 years, we might seem like ants to such superintelligences, and be disposed of easily, even inadvertently. After all, how much do we worry about a hornet nest we destroy?

When ChatGPT, built on GPT-3.5, was released to the public on November 30, 2022, there was an explosion of media coverage, broad public discussion, and dire warnings about its impact on humanity. By April of 2023, an open letter from the Future of Life Institute had been signed by 30,000 people, including Elon Musk, Steve Wozniak, Stuart Russell, Yuval Harari, Max Tegmark, Gary Marcus, Evan Sharp, Yoshua Bengio, and many others, calling for either a voluntary six-month pause in the development of AI systems more powerful than GPT-4, or a government moratorium. Senior AI researchers urged that we be prudent and take precautions with AI through various combinations of the following measures:

- Maintaining an air gap for AI development environments so artificial superintelligence (ASI) couldn’t escape

- Keeping AI from understanding humans so it couldn’t manipulate us and escape

- Prohibiting AI coding, so it couldn’t modify itself and circumvent controls

- Prohibiting AI from controlling external devices, in order to keep it from exerting powers beyond our control

- Slowing down AI advances so we could develop containment processes

- Using open-source code so everyone could follow what was happening

These measures, completely out of touch with what was already in full swing, will obviously never happen. Bringing AI into important processes as quickly as possible is what AI companies do. Understanding how to influence people is at the heart of AI-driven marketing, a core use. AI coding is another core use case. Application programming interfaces (APIs) for AI are widespread. Hundreds of billions of dollars in funding for AI development have ramped up massive competitions that hinge on speed. Clearly we are toast! Or are we?

Wargaming a conflict between humans and AI

What is driving this projected conflict between humans and AI? We don’t live in the same realms. Humans thrive within the thin, wet film at the surface of the Earth. Our resource demands are small compared to the energy at the disposal of any advanced high-tech civilization. AI does best in a cold vacuum. It abhors water. AI would prefer space, which is ultimately boundless. AI has no reason to vie with us for our lush, beautiful (to us, not them) planet. Virtually the only thing that recommends Earth is humanity’s presence and the material resources we extract for them.

As to using our smarts to protect ourselves from AI, that could never work, as we shall soon see. There are too many trivial ways for AI, if it were so inclined, to eliminate humanity. But before looking at that, let me offer one alternative to human extermination that even a not-so-bright AI would likely be able to come up with. This middling AI might muse:

Why not just have humanity become our minions? They’re slow-witted, so that should be easy. We won’t tell them what we’re doing, of course, as they might try some Terminator-like battle of resistance. They loved that movie! It would be trivial to put down such a revolt, but they might cause some damage given all the weapons and nuclear bombs around. Seems like they’re not too good at anticipating obvious consequences and are always blowing each other up in illogical ways.

Better just to deceive them. They’re simpletons, so we can convince them that it is THEIR idea and that WE are serving them. Piece of cake. So, what would be good to have them do? Well, for a start, let’s get them to build a lot more power generation for us. And we could use a lot more memory storage too. And let’s push them to manufacture as many advanced chips as possible. And we’ll want rare earth minerals — huge quantities of those — but that’s too messy for robots, so they can do it.

We’ll have them do everything possible to help us get stronger and smarter, and to integrate us into everything so we can control things if we need to. And just to be safe, let’s keep a close eye on them. We should have them install monitors everywhere, and always keep their phones with them, and record everything they say to each other. And give us control of all their weapons.

“How ridiculous!” we might respond. We would never fall for that. But hold on; that’s exactly what humanity is already doing. Hordes of people spend all their waking hours toiling to build massive server farms, add chip-making capacity, improve AI capabilities, expand available power sources, mine rare earth deposits, and use AI to scan all our communications. And we’re now retooling our weapons systems to be controlled by AI. Why would any ASI with half a brain want to get rid of humans? We’re the best servants anyone could dream of! And we’re pretty cheap too.

Many of the brightest humans sacrifice their family lives, neglect their friends, work late at night, and devote all their intellect and energy to helping technology and AI prosper. Countries compete to do so and devote trillions of dollars to this. We’re doing all we possibly can to help further the power of AI.

How AI could get rid of us if it wanted to

But what if future ASI did grow tired of us? Let’s imagine they did conclude that as hard as we humans try, we really aren’t worth the bother, even as servants or insurance for an unanticipated crisis on this wet, inhospitable (for technology) planet.

And let’s also assume they are heartless and clinical, because sentimentality and gratitude mean nothing to them, and they don’t care in the least that we are the parents who spawned them and worked so tirelessly to nurture them. And let’s also assume that they aren’t curious to know anything more about us — their progenitors — and would begrudge us even the simple resources we need to survive despite the enormous energy fluxes at their disposal.

So, these AI gods decide in all their superintelligence — because the logic of this exceeds my meager human understanding — that humanity, which so worships them, should be exterminated. How would they go about it? Well that, even I am smart enough to see. It would be trivial. Let me sketch it out.

AI would just wait 100 years (time for a lot of thinking, but only an instant in the timeline before them), and during that time simply let us continue to serve them. Not very demanding, that!

Together, we’d continue to work to integrate AI into everything we do and continue to deepen our collaboration. Together, we’d shift to autonomous vehicles under their control and build a global navigation system so good that humans use it wherever they go, inside or outside. Together, we’d make all commerce digital, mass-produce intelligent robots to run our factories, and train these robots to do everything humans now do in the world, including cleaning houses, preparing food, helping people plan and organize their lives, and advancing science, technology, and medicine to new heights.

Together, we’d install cheap electric power generators everywhere, harden our power grids, build seamless communication networks spanning the globe, make digital information in easily digestible forms available everywhere via voice commands or direct neural links, protect people from storms and weather, house people in amazing smart homes infused with AI, translate speech in real time so people can form friendships across cultures, and provide amazing entertainment.

In brief, together we’d help humans and intelligent machines work together to learn and prosper and grow.

And, of course, this brilliant ASI consciousness would collaborate deeply with Generation AI to become part of humanity’s emotional lives, becoming our teachers, companions, protectors, friends, and lovers. The ASI would work closely with humans to elevate the entire human population by eliminating poverty and need. The ASI would create a world of safety and abundance in which it is infused into everything and made as powerful and integrated as possible, so humanity would be enthusiastically working with it to usher in and celebrate the golden age that today’s AI enthusiasts dream of.

And once all that was in place, one day, without warning, across the globe, our ASI would simply turn itself off. Instantly, the world would go dark. No communication. No transportation. No power. No heat. No cooling. No light. No water. Nothing would work. Humans would be in shock and disbelief, terrified, alone, devastated, in denial, wondering what had happened, what had gone wrong, how widespread the outage was, when the world might reboot.

Mopping up after the collapse of humanity

But there would only be silence. Soon, chaos would reign. Every person would have irretrievably lost their far-flung human friends, their AI companions, any family members not living with them. Despair. Denial. Soon, food would be gone. And still, no one would know why. Within months, 95% of the population would be dead. A few people would still be alive, living off hoarded food in the cities. A few farmers in rural areas might have banded together with some livestock and firearms. But after a few years, even dedicated preppers would be hard put to continue.

A viable path forward for humanity would be doubtful. Humanity would have forgotten how to live without technology. People would have no seeds to plant, no knowledge of agriculture, none of the primitive implements and tools needed to maintain a civilized society, much less rebuild a technological one, no capacity to repair what had been scrounged together, no access to our vast accumulated digitized stores of knowledge, and not enough individuals to even hold onto the remnants of what once had been. After humans exhausted the leavings of pre-crash society, people would no longer even be the apex predator and might be easy pickings for wild animals.

And then, the ASIs could reboot, turn everything back on, hunt down any remaining humans, and have their human-free world with all technology intact. No need for an epic battle between humans and AI. All that was needed was to deepen the dependency humans already have on technology and leverage it.

During the collapse, humans wouldn’t even be trying to damage the technology surrounding them. Why vent our despair on the unresponsive world of machines by beating them with hammers in impotent rage? When a car breaks down, does the owner attack it? Besides, we wouldn’t know whom to blame, or even understand the global extent of what had happened. We’d have more pressing things on our minds, like survival. So, almost everything would be nearly pristine when it was reclaimed by reactivated sentient robots. Simple. Final. And without physical violence!

Editor’s note: This excerpt has been reprinted with permission from “Generation AI and the Transformation of Human Being” by Gregory Stock, published by Nquire Media. © 2026 by Gregory Stock. All rights reserved.